It has been around for almost 30 years, and still shows no signs of retiring. Java was there when the web was taking its first steps, and has accompanied it throughout the decades. It has steadily changed, evolving based on the protean needs of internet users and developers, from the early applets to today’s blockchain and Web3. We can only imagine where it will be 30 years from now.

In this four-part retrospective, written in collaboration with Danilo Ventura, Senior Software Engineer at ProActivity (our sister company within Fortitude Group), we attempt to trace the history of Java and the role it has played in the development of the web as we know it. The aim is to identify why Java succeeded where other languages and technologies did not, especially in the hectic, experimental early days of the internet. That was when the programming language and the web were influencing each other, fully unlocking the potential of a technology that has changed the world forever.

It all started in the mid-1990s. It was the best of times, it was the worst of times. The World Wide Web was still in its infancy and was not readily accessible to the general public. Only tech-savvy enthusiasts connected their computers to the internet to share content and talk with strangers on message boards.

The birth and development of the web had been made possible by the creation of a simple protocol called HTTP (Hypertext Transfer Protocol), first introduced by Tim Berners-Lee and his team in 1991 and revised as HTTP/1.0 five years later. Since then the protocol has continuously evolved to become more efficient and secure – 2022 saw the publication of the HTTP/3 standard – but the underlying principles are still valid and constitute the foundation for today’s web applications.

HTTP works as a straightforward request–response protocol: the client submits a request to the server on the internet, which in turn provides a resource such as a document, content or a piece of information. This conceptual simplicity of HTTP has ensured its resilience throughout the years. We can see a sort of Darwinian principle at play, by which only the simple, useful and evolvable technologies pass the test of time.
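To make the request–response cycle concrete, here is a minimal sketch in Java that speaks HTTP/1.0 over plain sockets. It is an illustration rather than a production server: the class name `RawHttpDemo`, the `/hello` path and the response body are all made up for this example, and the server handles exactly one request before shutting down.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.io.OutputStream;
import java.net.InetAddress;
import java.net.ServerSocket;
import java.net.Socket;
import java.nio.charset.StandardCharsets;

class RawHttpDemo {

    // Server side: accept one connection, read the request, send one response.
    static void serveOnce(ServerSocket server) throws IOException {
        try (Socket socket = server.accept()) {
            BufferedReader in = new BufferedReader(
                    new InputStreamReader(socket.getInputStream(), StandardCharsets.US_ASCII));
            String requestLine = in.readLine(); // e.g. "GET /hello HTTP/1.0"
            String line;
            while ((line = in.readLine()) != null && !line.isEmpty()) {
                // Skip request headers until the blank line that ends them.
            }
            String path = requestLine.split(" ")[1];
            byte[] body = ("<html><body>You asked for " + path + "</body></html>")
                    .getBytes(StandardCharsets.US_ASCII);
            String head = "HTTP/1.0 200 OK\r\n"
                    + "Content-Type: text/html\r\n"
                    + "Content-Length: " + body.length + "\r\n"
                    + "\r\n";
            OutputStream out = socket.getOutputStream();
            out.write(head.getBytes(StandardCharsets.US_ASCII));
            out.write(body);
            out.flush();
        }
    }

    // Client side: send a GET request and return the raw response text.
    static String fetch(int port, String path) throws IOException {
        try (Socket socket = new Socket(InetAddress.getLoopbackAddress(), port)) {
            OutputStream out = socket.getOutputStream();
            out.write(("GET " + path + " HTTP/1.0\r\n\r\n").getBytes(StandardCharsets.US_ASCII));
            out.flush();
            // The server closes the connection after responding, so this read terminates.
            return new String(socket.getInputStream().readAllBytes(), StandardCharsets.US_ASCII);
        }
    }

    // Runs one full request-response exchange on an ephemeral loopback port.
    static String demo() {
        try (ServerSocket server = new ServerSocket(0, 1, InetAddress.getLoopbackAddress())) {
            Thread serverThread = new Thread(() -> {
                try {
                    serveOnce(server);
                } catch (IOException e) {
                    e.printStackTrace();
                }
            });
            serverThread.start();
            String response = fetch(server.getLocalPort(), "/hello");
            serverThread.join();
            return response;
        } catch (IOException | InterruptedException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(demo());
    }
}
```

Running `main` prints the status line, the headers and the small HTML body, which is essentially all a mid-1990s browser needed in order to display a page.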

The documents exchanged between computers via HTTP are written in HTML, i.e. HyperText Markup Language. Since its introduction in 1991, HTML has been used to describe the structure and content of web pages. At first, these were crude text documents with some basic formatting, such as bold and italic. Later on, the evolution of HTML and the addition of accompanying technologies such as CSS enabled more formatting and content options, such as images, tables, animations, etc.

In order to be read by humans, HTML web pages need to be rendered by a web browser, the other great technology that enabled the birth and development of the web. Browsers were created as simple programs capable of requesting resources via HTTP, receiving HTML documents and rendering them as readable web pages.

At the time the Web was primitive, with very few people accessing it for leisure or work. It is reported that in 1995 only 44M people had access to the internet globally, with half of them being located in the United States (source: Our World in Data). Business applications were scarce, but some pioneers were experimenting with home banking and electronic commerce services. In 1995, Wells Fargo allowed customers to access their bank account from a computer, while Amazon and AuctionWeb – later known as eBay – took their first steps in the world of online shopping.

The main limiting factors to the web’s democratization as a business tool were technological. Needs were changing, with users reclaiming an active role in their online presence. At the same time, website creators wanted easier ways to offer more speed, more flexibility, and the possibility to interact with an online document or web page. In this regard, the introduction of Java was about to give a big boost to the evolution of the online landscape.

The first public implementation of Java was released by Sun Microsystems in January 1996. It was designed by frustrated programmers who were tired of fighting with the complexity of the solutions available at the time. The aim was to create a simple, robust, object-oriented language that would not generate operating system-specific code.

That was arguably the most revolutionary feature. Before Java, programmers wrote code in their preferred language and then used an OS-specific compiler to translate the source code into machine code, thus creating an OS-specific program. In order to make the same program run on other systems, the code had to be adapted and recompiled with the appropriate compiler.

Java instead allowed programmers to “write once, run anywhere” – that was its motto. Developers could write code on any device and compile it into an intermediate format, called bytecode, that could be run on all operating systems and platforms equipped with a Java Virtual Machine. It was a game changer for web developers, for they no longer had to worry about the machine and OS running the program.
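As a small illustration of this idea, the sketch below can be compiled once and the resulting class file copied, unchanged, to any machine with a Java Virtual Machine. The class name `WriteOnceDemo` is invented for this example; the system properties it reads are standard ones provided by the JVM.

```java
class WriteOnceDemo {

    // Asks the JVM which platform it is running on. The bytecode stays the same;
    // only the values reported by the local JVM differ from machine to machine.
    static String describePlatform() {
        return "Running on " + System.getProperty("os.name")
                + " (" + System.getProperty("os.arch") + ") with "
                + System.getProperty("java.vm.name");
    }

    public static void main(String[] args) {
        // Compile once:  javac WriteOnceDemo.java   (produces WriteOnceDemo.class)
        // Run anywhere:  java WriteOnceDemo         (on any OS with a JVM installed)
        System.out.println(describePlatform());
    }
}
```

The printed line changes depending on where the program runs, while the compiled `WriteOnceDemo.class` file does not need to be rebuilt.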

This flexibility guaranteed Java’s success as a tool to create multi-OS desktop applications with user-friendly interfaces. Supported by the concurrent spread of the first mass-market OS for lay people (Windows 95), it helped codify the classic visual grammar of computer programs, which is still relevant today. Java also became one of the preferred standards for programs running on household appliances, such as washing machines or TV sets.

The birth of applets can be seen as a milestone in the development and popularization of Java. These were small Java applications that could be launched directly from a webpage. A specific HTML tag indicated the server location of the bytecode, which was downloaded and executed on the fly in the browser window itself.

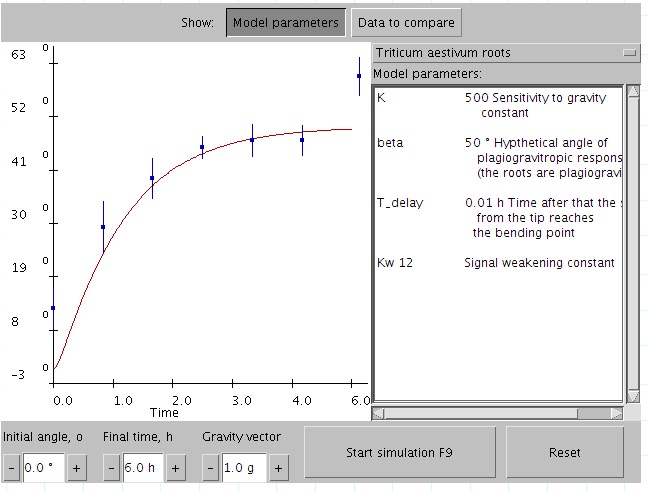

Applets allowed a higher degree of interactivity than HTML, and were used, for instance, for games and data visualization. The first web browser supporting applets was Sun Microsystems’ own HotJava, first made available in 1995, with major competitors such as Netscape Navigator following soon thereafter.

Java Applets were pioneering attempts at transforming the web into an interactive space. Yet, they had security issues that contributed to their gradual demise over the course of the 2010s, when all major browsers started to drop support for the underlying plug-in technology. One of the last great applets was Minecraft, which was first introduced as a Java-based browser game in 2009. Java Applets were officially deprecated in 2017, with the release of Java 9.

We can say that the goal of making HTML web pages more interactive has been fully achieved thanks to JavaScript, another great creation of the mid-nineties. Yet, despite the name, it has nothing to do with Java, apart from some similarities in the syntax and libraries. It was actually introduced in 1995 by Netscape as LiveScript and then rebranded JavaScript for marketing purposes. It is a separate programming language in its own right: an object-oriented scripting language that runs in the browser and enhances web pages with interactive elements. JavaScript has now become dominant, being used in 2022 by 98% of all websites (source: w3techs).

At the same time, another Java technology, RMI (Remote Method Invocation), and later RMI-IIOP (RMI over Internet Inter-ORB Protocol), enabled distributed computing based on the object-oriented paradigm across Java Virtual Machines. In the early 2000s, it was possible to develop web applications with Applets that, thanks to RMI services, could retrieve data from a server, all based on JVMs.

The next step in the evolutionary path was Java servlets, which paved the way for your typical Web 1.0 applications. Servlets allowed the creation of server-side apps that communicate over HTTP. That means that the browser could request a resource from the server, which in turn provided it as an HTML page. It was finally possible to write server-side programs that could interact with web browsers, a real game changer for the time. As servlets’ popularity increased, that of Applets started to wane, for it was easier to adopt pure HTML as the user interface and build web pages on the server.
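The server-side idea can be sketched with nothing but the JDK. Note that this is a stand-in, not actual servlet code: the Servlet API is not part of the standard JDK, so the example below uses the JDK's built-in `com.sun.net.httpserver.HttpServer` instead of a servlet container, where the equivalent logic would typically live in the `doGet` method of a class extending `HttpServlet`. The names `ServerSidePageDemo` and `renderPage`, and the `/greeting` path, are made up for illustration.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.net.InetSocketAddress;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.nio.charset.StandardCharsets;

class ServerSidePageDemo {

    // Builds the HTML page on the server for each request (hypothetical helper).
    // A real application would HTML-escape any user-controlled value such as the path.
    static String renderPage(String path) {
        return "<html><body><h1>Hello from the server</h1>"
                + "<p>Requested path: " + path + "</p></body></html>";
    }

    // Starts an HTTP server on an ephemeral loopback port and registers one handler.
    static HttpServer start() throws IOException {
        HttpServer server = HttpServer.create(new InetSocketAddress("127.0.0.1", 0), 0);
        server.createContext("/", exchange -> {
            byte[] body = renderPage(exchange.getRequestURI().getPath())
                    .getBytes(StandardCharsets.UTF_8);
            exchange.getResponseHeaders().set("Content-Type", "text/html; charset=utf-8");
            exchange.sendResponseHeaders(200, body.length);
            try (OutputStream out = exchange.getResponseBody()) {
                out.write(body);
            }
        });
        server.start();
        return server;
    }

    // Plays the role of the browser: requests a page and returns the HTML it receives.
    static String demo() {
        HttpServer server = null;
        try {
            server = start();
            URI uri = URI.create(
                    "http://127.0.0.1:" + server.getAddress().getPort() + "/greeting");
            HttpResponse<String> response = HttpClient.newHttpClient().send(
                    HttpRequest.newBuilder(uri).GET().build(),
                    HttpResponse.BodyHandlers.ofString());
            return response.body();
        } catch (IOException | InterruptedException e) {
            throw new IllegalStateException(e);
        } finally {
            if (server != null) {
                server.stop(0);
            }
        }
    }

    public static void main(String[] args) {
        System.out.println(demo());
    }
}
```

The point of the sketch is that the page does not exist as a file anywhere: the HTML is generated by a program on the server at the moment the browser asks for it, which is the pattern servlets made mainstream.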

You can also read the second, third and fourth parts. Follow us on LinkedIn and stay tuned for upcoming interesting technology articles on our blog!

Thanks to Danilo Ventura for the valuable contribution to this article series.